How drones and AI are mapping deadwood in Finnish forests

About a quarter of all species in Finnish forests depend on deadwood and decaying wood, which are among the most important components of boreal forest biodiversity. Many of these species are included in the IUCN Red List. Yet despite this ecological importance, deadwood remains severely under-documented in Finland. Traditional forest inventories often overlook deadwood or provide only large-scale estimates, leaving a critical gap in conservation efforts.

A new study, published in Remote Sensing of Environment and conducted in Finland’s Hiidenportti National Park and Evo, explores ways to improve deadwood mapping. The authors, supported by OBSGESSION, turned to Unoccupied Aerial Vehicles (UAVs) equipped with high-resolution cameras to map deadwood precisely. While commercial satellite imagery and aerial photography typically offer a ground sampling distance (GSD) of around 30 cm, UAVs can achieve resolutions finer than 5 cm, which makes them well suited to detecting small objects such as fallen deadwood.
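To see why the difference between a 30 cm and a 5 cm ground sampling distance matters, it helps to count how many pixels a single fallen trunk covers. The short sketch below does that arithmetic for a hypothetical trunk 5 m long and 0.2 m in diameter; the trunk size and the helper names are illustrative assumptions, not values from the study, and the calculation ignores occlusion, shadows and blur.

```python
# Hypothetical example object: a fallen trunk 5 m long and 0.2 m in diameter.
TRUNK_LENGTH_M = 5.0
TRUNK_WIDTH_M = 0.2


def pixels_across(size_m: float, gsd_m: float) -> float:
    """Number of pixels spanned by one object dimension at a given ground sampling distance."""
    return size_m / gsd_m


def footprint_pixels(length_m: float, width_m: float, gsd_m: float) -> float:
    """Approximate pixel area of a rectangular footprint (ignores occlusion and blur)."""
    return pixels_across(length_m, gsd_m) * pixels_across(width_m, gsd_m)


if __name__ == "__main__":
    for label, gsd in (("aerial/satellite, 30 cm", 0.30), ("UAV, 5 cm", 0.05)):
        width_px = pixels_across(TRUNK_WIDTH_M, gsd)
        area_px = footprint_pixels(TRUNK_LENGTH_M, TRUNK_WIDTH_M, gsd)
        print(f"{label}: {width_px:.2f} px across, ~{area_px:.0f} px in total")
```

At 30 cm the trunk is less than one pixel wide and covers only about 11 pixels in total, whereas at 5 cm it is 4 pixels wide and covers about 400 pixels, enough for a segmentation model to outline its shape.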

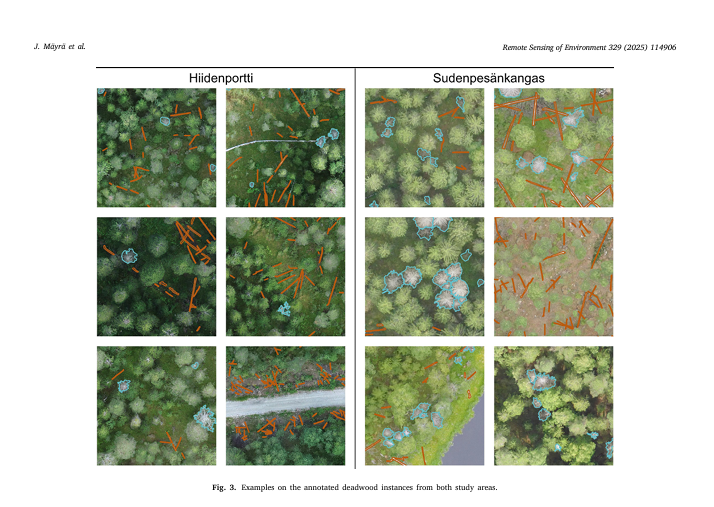

In the publication, titled “Using UAV images and deep learning to enhance the mapping of deadwood in boreal forests”, the authors describe using YOLOv8 by Ultralytics to detect and segment standing and fallen deadwood instances in RGB UAV imagery. The research covers two geographically distinct study areas in Finland, Hiidenportti National Park and Evo, where approximately 13,800 deadwood instances were manually annotated to serve as training and validation data for the instance segmentation models. These annotations were also compared with field-measured deadwood data from Hiidenportti to assess how much of the deadwood is visible in UAV imagery at all.
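For training, Ultralytics YOLOv8 segmentation models read one text label file per image, in which each line holds a class index followed by the polygon's vertex coordinates normalised to the 0–1 range. The sketch below converts one annotated polygon in pixel coordinates into such a line. The helper name and the class mapping (0 = standing, 1 = fallen) are hypothetical; the study's actual annotation pipeline is not described here.

```python
from typing import Sequence, Tuple

# Hypothetical class mapping for the two deadwood types.
CLASS_IDS = {"standing": 0, "fallen": 1}


def to_yolo_seg_line(class_id: int,
                     polygon: Sequence[Tuple[float, float]],
                     img_w: int,
                     img_h: int) -> str:
    """Format one polygon (pixel coordinates) as a YOLO segmentation label line."""
    if len(polygon) < 3:
        raise ValueError("a polygon needs at least three vertices")
    coords = []
    for x, y in polygon:
        # Normalise by image size and clamp vertices that fall outside the image tile.
        nx = min(max(x / img_w, 0.0), 1.0)
        ny = min(max(y / img_h, 0.0), 1.0)
        coords.append(f"{nx:.6f} {ny:.6f}")
    return f"{class_id} " + " ".join(coords)


if __name__ == "__main__":
    # A thin triangle standing in for a fallen-trunk outline in a 1000 x 1000 px tile.
    print(to_yolo_seg_line(CLASS_IDS["fallen"], [(100, 200), (300, 200), (300, 220)], 1000, 1000))
```

Clamping keeps labels valid when an annotation drawn on a large orthomosaic is cut into smaller image tiles, a common step before training; whether the authors tiled their imagery this way is an assumption.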

The authors, among them OBSGESSION’s own Janne Mäyrä and Petteri Vihervaara, approached deadwood detection through high-resolution drone images that could potentially serve as reference material for large-scale deadwood mapping. They first determined the accuracy achievable through visual interpretation of high-resolution RGB images, evaluating the correctness of the manually annotated deadwood objects against field-measured reference data. In the second phase, they trained instance segmentation models to interpret the images automatically. With this setup, the study aimed to answer the following questions:

1. How much of the fallen and standing deadwood is it possible to detect from above using optical UAV imagery?

2. How does canopy cover affect the visibility of fallen deadwood?

3. To what extent can visible deadwood be detected with YOLOv8?

4. How does the composition of training data affect the performance of the instance segmentation model in the context of deadwood detection?
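Questions 1 and 3 both come down to how many reference deadwood instances are recovered by a second set of outlines, whether manual annotations checked against field data or model predictions checked against annotations. One common way to score this for instance segmentation is to match masks one-to-one by intersection over union (IoU) and then compute precision and recall. The sketch below is a generic illustration, not the study's evaluation code: masks are represented as sets of pixel coordinates, and the 0.5 IoU threshold and greedy matching are assumptions.

```python
from typing import List, Set, Tuple

Pixel = Tuple[int, int]


def rect(x0: int, y0: int, x1: int, y1: int) -> Set[Pixel]:
    """Hypothetical helper: a rectangular mask covering x0 <= x < x1, y0 <= y < y1."""
    return {(x, y) for x in range(x0, x1) for y in range(y0, y1)}


def mask_iou(a: Set[Pixel], b: Set[Pixel]) -> float:
    """Intersection over union of two binary masks given as pixel sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def match_instances(preds: List[Set[Pixel]], refs: List[Set[Pixel]],
                    iou_thr: float = 0.5) -> Tuple[int, float, float]:
    """Greedy one-to-one matching by descending IoU; returns (true positives, precision, recall)."""
    candidates = []
    for i, p in enumerate(preds):
        for j, r in enumerate(refs):
            iou = mask_iou(p, r)
            if iou >= iou_thr:
                candidates.append((iou, i, j))
    candidates.sort(reverse=True)  # best-overlapping pairs are matched first
    used_preds, used_refs = set(), set()
    for _, i, j in candidates:
        if i not in used_preds and j not in used_refs:
            used_preds.add(i)
            used_refs.add(j)
    tp = len(used_preds)
    precision = tp / len(preds) if preds else 0.0
    recall = tp / len(refs) if refs else 0.0
    return tp, precision, recall


if __name__ == "__main__":
    refs = [rect(0, 0, 10, 2), rect(20, 20, 30, 22)]   # two reference trunks
    preds = [rect(0, 0, 10, 2), rect(50, 50, 55, 55)]  # one hit, one false alarm
    print(match_instances(preds, refs))
```

In this framing, recall against field-measured data answers "how much deadwood is visible at all", while recall against the annotations answers "how much of the visible deadwood the model finds".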